Stop automating your clinical blind spots

How to fix trial diversity, catch AI hallucinations, and close the loop on wearables.

HT4LL-20260324

Hey there,

Are we blindly deploying algorithms that perfectly replicate our own clinical blind spots?

You are under immense pressure to deploy AI to speed up recruitment, streamline triage, and analyze wearable data. But every time you roll out a new model, you hit a wall of operational anxiety. Will the generative AI hallucinate a diagnosis? Will the wearable data actually translate to better at-home patient care, or just drown us in noise and app fatigue? We are so obsessed with the speed of AI that we are ignoring the sociotechnical safeguards required to make it trustworthy and equitable in the real world.

Today, we are looking at the hard guardrails needed to build AI that actually works in the messy real world.

How AI solved a rare disease trial’s diversity problem in one week.

Why “sociotechnical governance” and UI warnings cut human-AI errors.

The dangerous gap between passive wearable data and human workflow integration.

If you’re trying to move your digital strategy from “hype-driven pilots” to equitable, regulatory-grade execution, here are the resources you need to dig into to secure a competitive advantage:

Weekly Resource List:

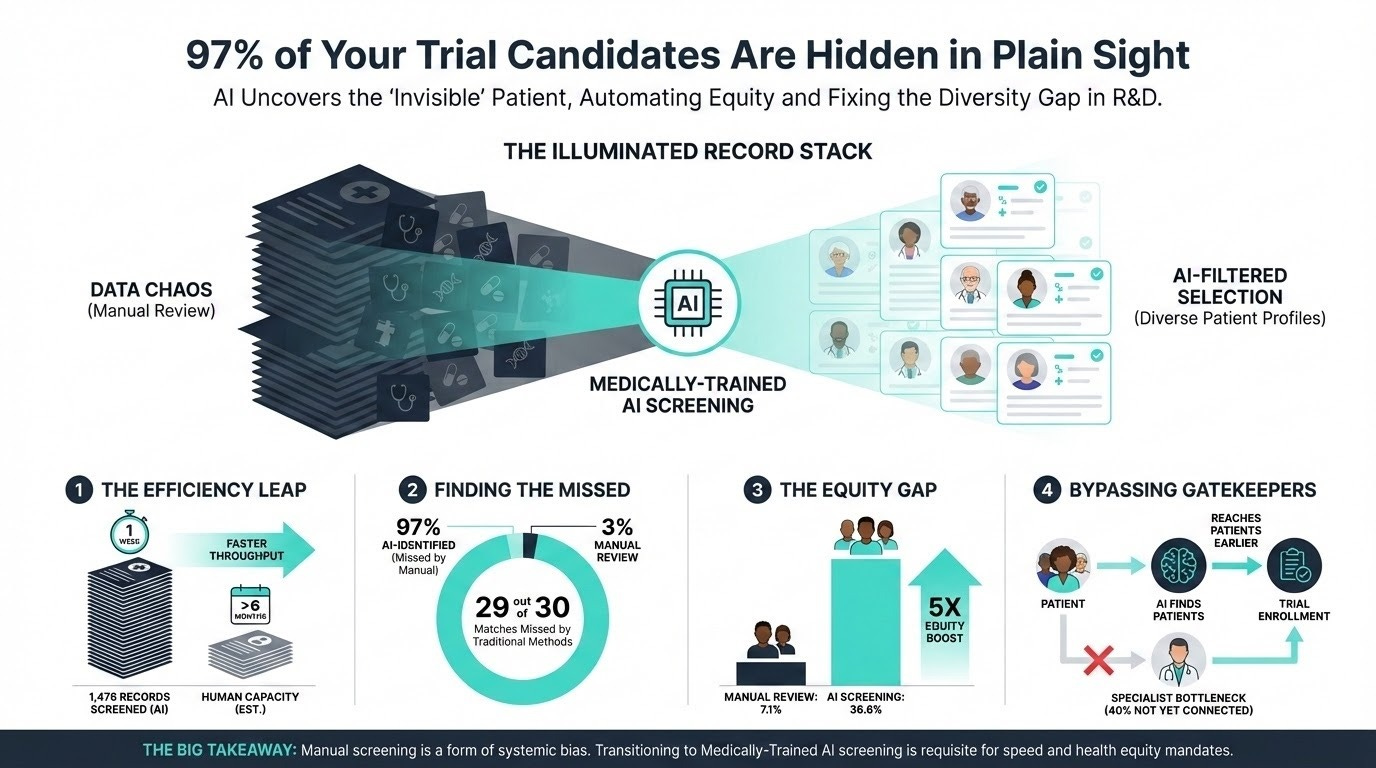

Automating Rare Disease Trial Enrollment (10 min read): Cleveland Clinic used a medically trained large language model to screen 1,476 electronic medical records for an ATTR-CM (transthyretin amyloid cardiomyopathy) trial.

The Takeaway: It achieved 96.2% accuracy, but the real win was equity. Black patients made up 36.6% of the candidates the AI identified, versus just 7.1% of those found by routine manual screening, showing that AI can shatter recruitment bottlenecks and counter systemic bias at the same time.

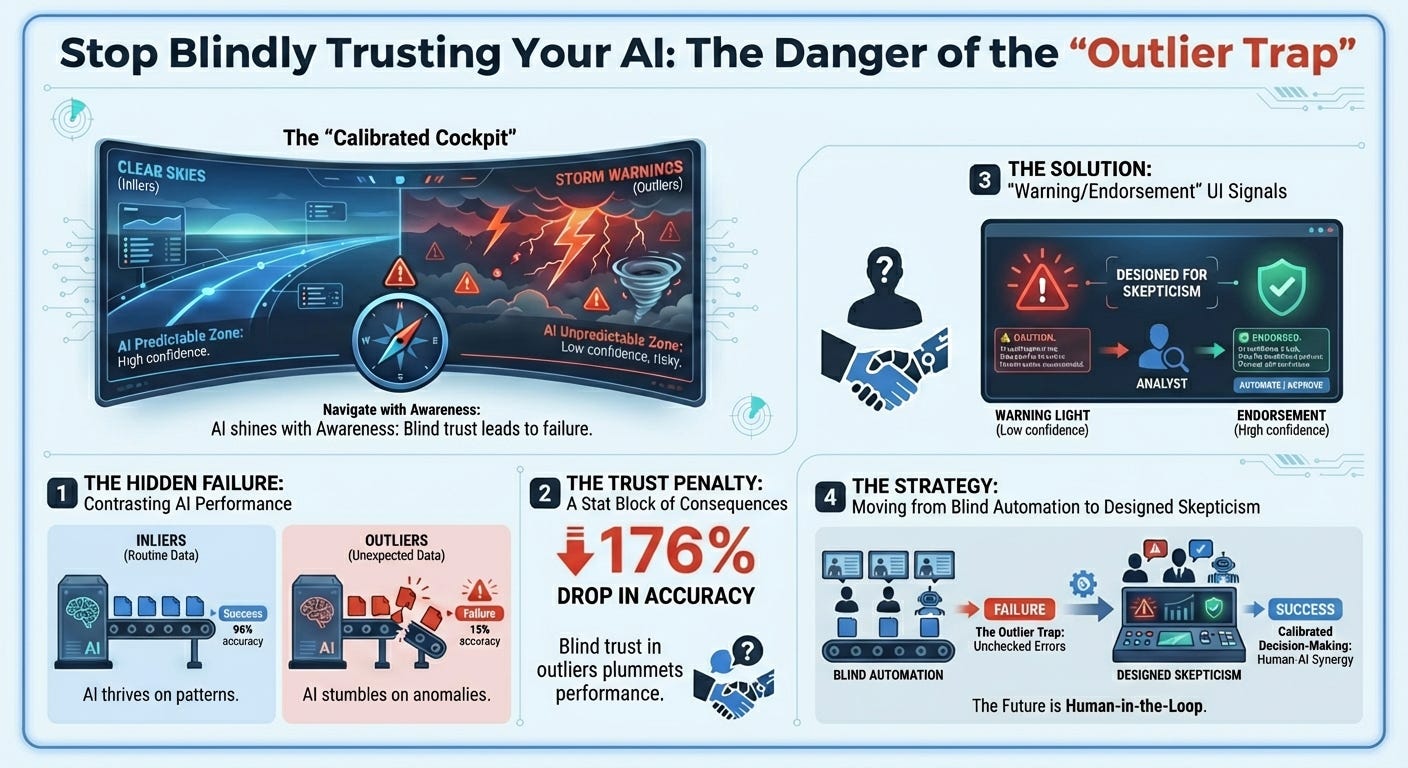

Catching Gen AI’s Bad Advice (12 min read): Harvard Business School research on human-AI collaboration and forecasting.

The Takeaway: Humans blindly trust confident algorithms, falling into “naive adjusting behavior” when evaluating outlier data the AI wasn’t trained on. Adding simple UI alerts (warnings for unusual data, endorsements for familiar data) cut user errors by nearly half (49%). Calibrated trust beats blind trust.

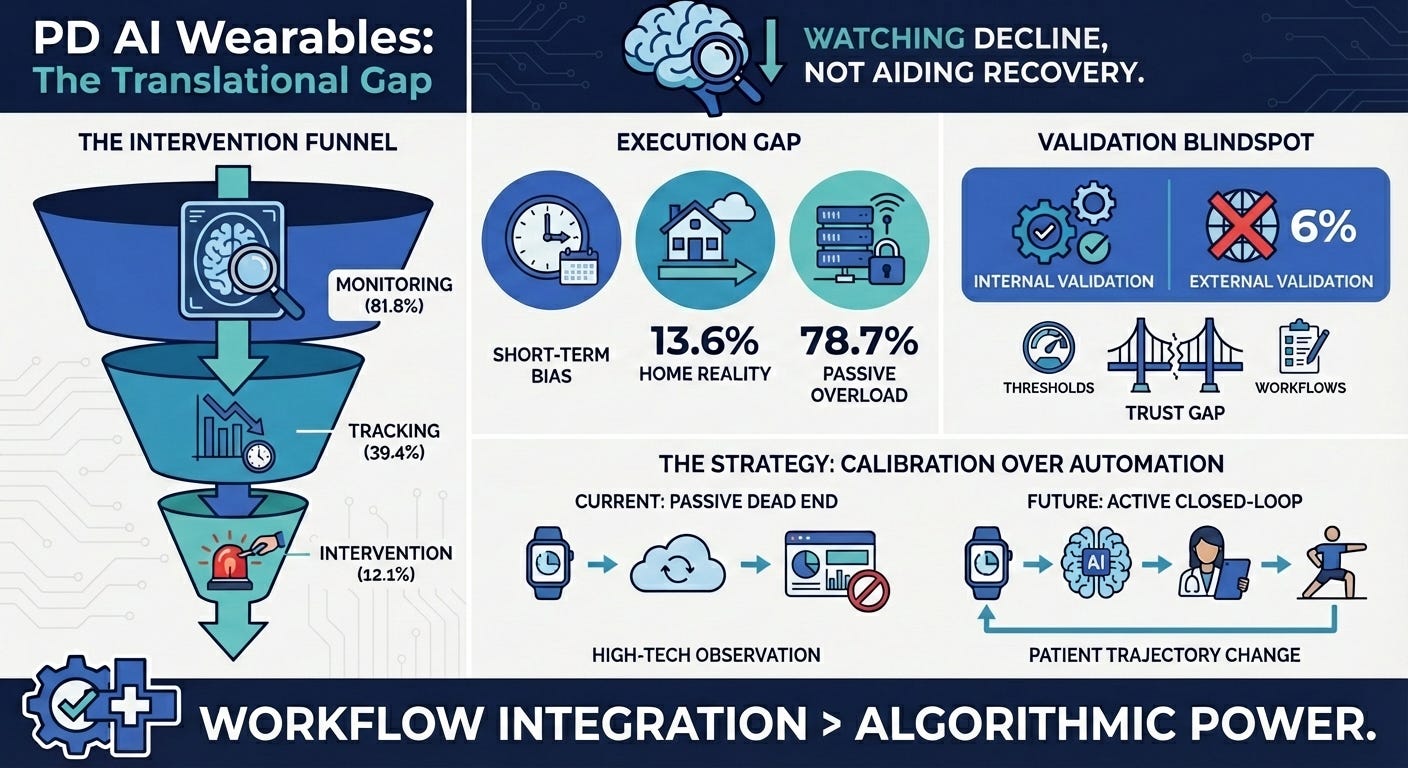

AI Wearables in Parkinson’s Disease (15 min read): A scoping review of 66 studies on AI-enabled wearables.

The Takeaway: The industry is stuck in the “assessment” trap. Most wearables passively monitor patients in clinical settings, but rarely translate into closed-loop, at-home rehabilitation interventions. We need to move from generating passive data to generating active clinical feedback.

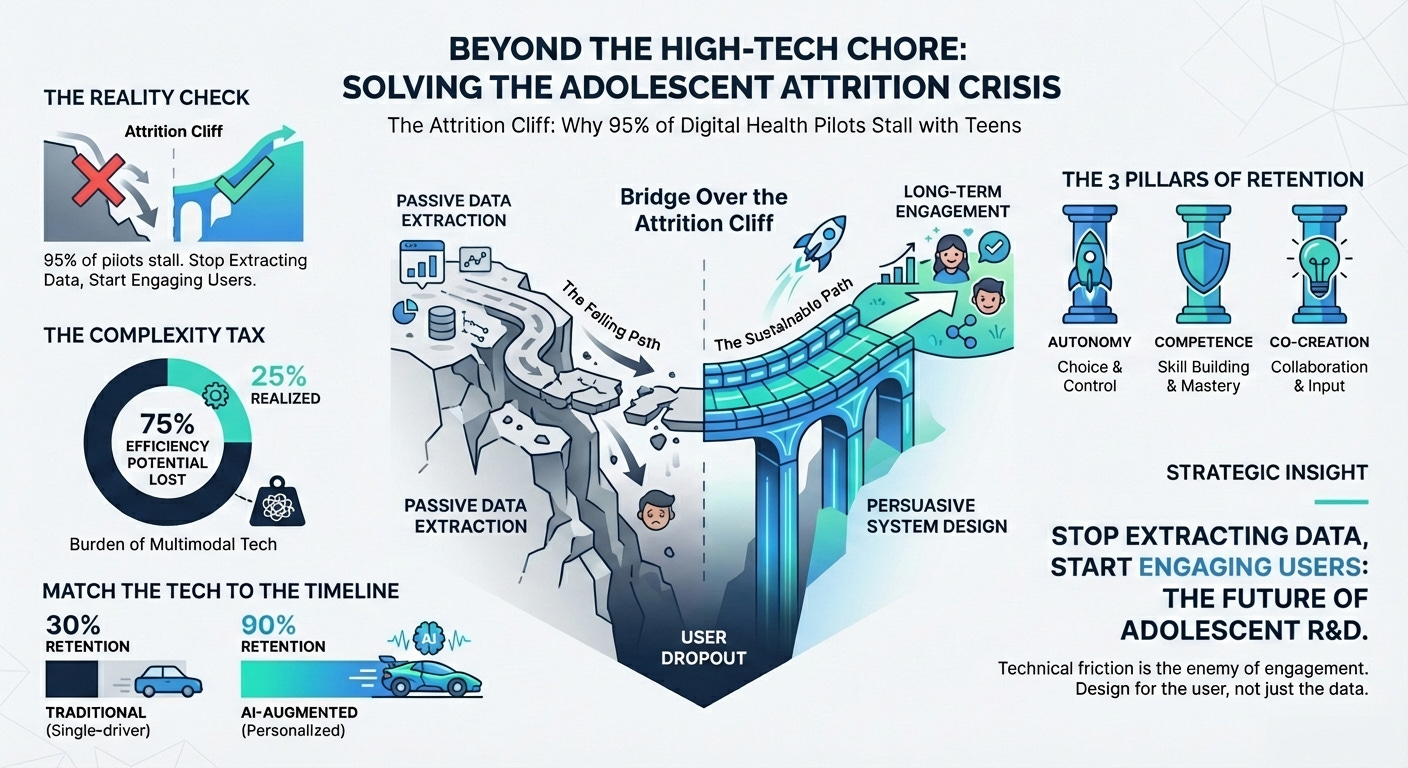

Digital Interventions for Adolescent Activity (15 min read): A systematic review of digital health interventions for youth.

The Takeaway: While multimodal apps improve activity, attrition is a massive challenge due to “tech dependency” and lack of personalization. If your digital endpoint creates too much technical friction for the patient, you will lose your longitudinal data.

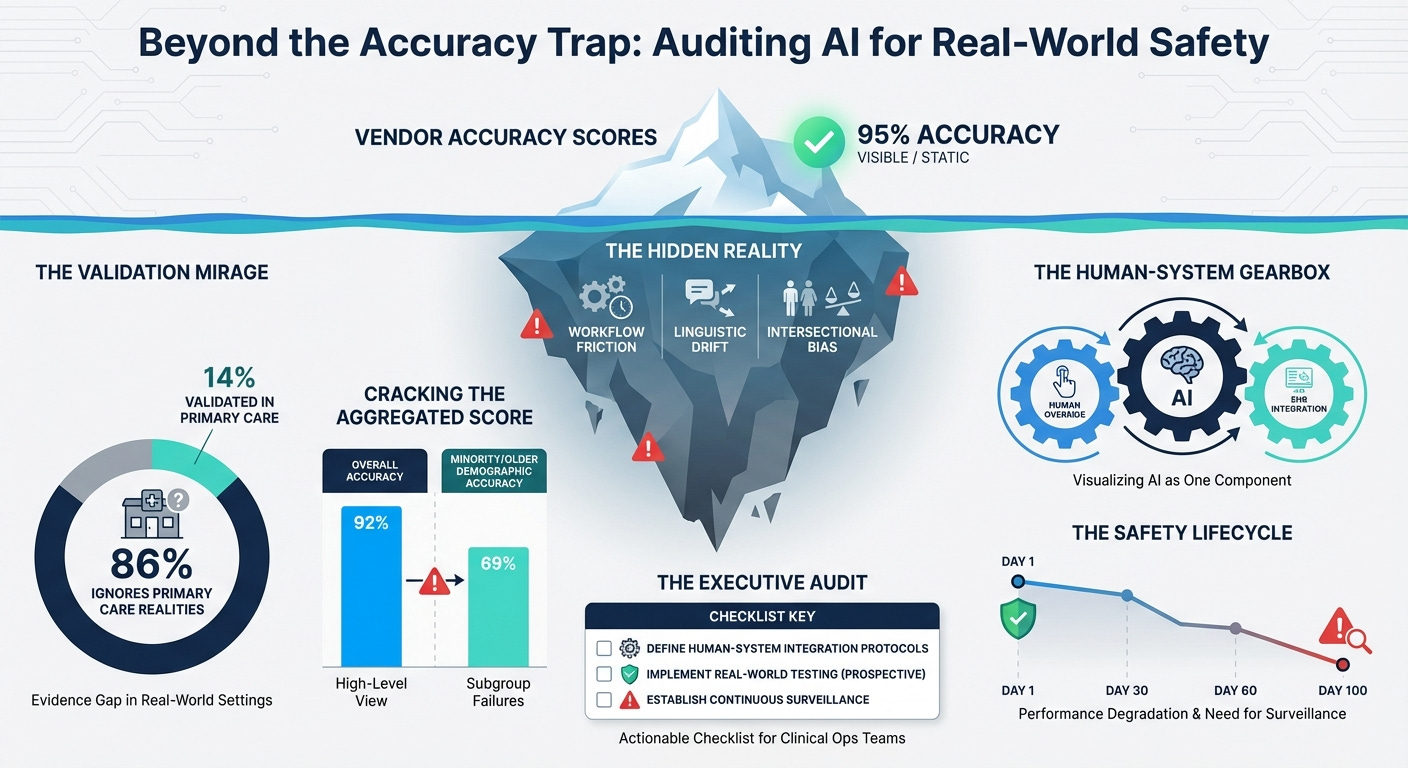

AI Triage and Real-World Equity (12 min read): A critical look at primary care AI triage systems.

The Takeaway: Most AI triage systems are validated on retrospective or emergency data, ignoring real-world workflows. Worse, most lack “equity-stratified” reporting. If you don’t actively measure algorithmic fairness across intersecting demographics, you can’t tell whether your triage tool is widening disparities.

3 Things To De-Risk Your Clinical AI Strategy

To build a scalable, trustworthy AI pipeline, you need three things: sociotechnical governance, continuous equity auditing, and human workflow integration.

1. Design for “Sociotechnical” Trust

You must pair user interface warnings with rigorous sociotechnical governance and clinical override protocols.

The Harvard Business School study shows that humans over-trust AI when it evaluates outlier data it wasn’t trained on. However, a warning light on a dashboard is useless if clinical staff are under organizational pressure to hit throughput metrics rather than act on their own judgment. True calibrated trust requires “explainable AI” that surfaces the specific clinical drivers behind an escalation, clear uncertainty indicators, and explicit “override required” prompts for edge cases. You must actively train your investigators to override the system when their clinical judgment contradicts the machine’s confidence. Safety comes from organizational culture and human-AI collaboration, not just model accuracy.
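To make “warnings for unusual data, endorsements for familiar data” concrete, here is a minimal sketch of how an advisory label could be attached to each model output. It is not the HBS study’s method: it assumes familiarity can be approximated by checking each input against the min/max range seen in training, and the names (`advise`, `Advisory`, `train_ranges`, `min_confidence`) and the 0.8 threshold are hypothetical.

```python
from __future__ import annotations
from dataclasses import dataclass


@dataclass
class Advisory:
    label: str          # "endorsed", "unusual_input" or "override_required"
    drivers: list[str]  # human-readable reasons shown next to the AI output


def advise(features: dict[str, float],
           train_ranges: dict[str, tuple[float, float]],
           confidence: float,
           min_confidence: float = 0.8) -> Advisory:
    """Attach a UI advisory to one model output (hypothetical rule set)."""
    reasons = []
    # Familiarity check (assumed proxy): is each input inside the training range?
    for name, value in features.items():
        if name not in train_ranges:
            reasons.append(f"{name}: not seen in training data")
            continue
        low, high = train_ranges[name]
        if not low <= value <= high:
            reasons.append(f"{name}={value} outside training range [{low}, {high}]")
    if reasons and confidence < min_confidence:
        # Unfamiliar input AND low confidence: require explicit human sign-off.
        return Advisory("override_required", reasons + [f"confidence={confidence:.2f}"])
    if reasons:
        # Unfamiliar input: warn even though the model sounds confident.
        return Advisory("unusual_input", reasons)
    return Advisory("endorsed", ["all inputs within training range"])


ranges = {"age": (40.0, 90.0), "heart_rate": (45.0, 130.0)}
print(advise({"age": 95, "heart_rate": 80}, ranges, confidence=0.65).label)
```

The design choice worth copying is that a confident-sounding model still gets a warning when the input is unfamiliar; that is exactly the case where the study found humans over-trust the machine.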

2. Mandate Continuous “Post-Market” Equity Surveillance

You need to move past pre-purchase vendor checklists and mandate continuous “post-market surveillance” for equity.

The Cleveland Clinic rare disease study showed the massive upside of AI: the share of Black patients identified jumped from 7.1% to 36.6%. But as the primary care triage paper highlights, an equitable model on day one can become a biased model by day 100 due to shifts in case-mix, local linguistic variation, or privacy laws that blind systems to emerging biases. You must build continuous “drift detection” and equity dashboards into your clinical operations. Use frameworks like PROGRESS-Plus to define the equity dimensions you track, then continuously monitor real-world incident reports and intersectional subgroup calibration (e.g., age × ethnicity) to ensure you aren’t widening disparities post-deployment.
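Here is a minimal sketch of what an intersectional calibration check inside an equity dashboard could look like. It assumes each scored case is later labeled with an observed outcome, and it uses calibration-in-the-large (mean predicted risk minus observed event rate) per age band × ethnicity cell; the field names, the 0.05 tolerance and the 30-case minimum are hypothetical choices, not taken from the triage paper.

```python
from collections import defaultdict


def subgroup_calibration(records, tolerance=0.05, min_n=30):
    """Per (age_band, ethnicity) cell: mean predicted risk minus observed rate.

    records: iterable of dicts with hypothetical keys
    'age_band', 'ethnicity', 'predicted' (0-1 probability), 'outcome' (0 or 1).
    """
    cells = defaultdict(lambda: [0, 0.0, 0])  # [n, sum of predicted, sum of outcomes]
    for r in records:
        cell = cells[(r["age_band"], r["ethnicity"])]
        cell[0] += 1
        cell[1] += r["predicted"]
        cell[2] += r["outcome"]
    report = {}
    for key, (n, sum_pred, sum_obs) in cells.items():
        if n < min_n:
            # Too few cases to judge; surface this rather than hide the cell.
            report[key] = ("insufficient_data", n, None)
            continue
        gap = sum_pred / n - sum_obs / n  # negative = model under-predicts risk
        status = "ok" if abs(gap) <= tolerance else "miscalibrated"
        report[key] = (status, n, round(gap, 3))
    return report


def newly_flagged(previous, current):
    """Drift signal: cells that were 'ok' last window but are miscalibrated now."""
    return sorted(key for key, value in current.items()
                  if value[0] == "miscalibrated"
                  and previous.get(key, ("",))[0] == "ok")
```

Run `subgroup_calibration` on each monitoring window (say, weekly) and feed `newly_flagged` into incident reporting; the “insufficient_data” status matters because small intersectional cells are where bias tends to hide.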

3. Integrate Sensors Directly Into Human Workflows

You must stop bombarding patients with app notifications and start integrating sensor data directly into human clinical workflows.

The Parkinson’s wearable review revealed that we deploy sensors to watch patients deteriorate, but we struggle to close the loop at home. Trying to close that loop with more gamification or push notifications drives massive attrition, as the adolescent digital health review shows with its warnings about “technological dependency” and technical fatigue. If your digital endpoint requires high technical maintenance from the patient, they will drop out. Instead, close the loop by feeding wearable data directly into nurse-led follow-ups and clinical dashboards, so human care teams can intervene exactly when the data flags a deterioration. Minimize the patient’s technical friction by letting the clinician close the loop.
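As an illustration of “letting the clinician close the loop”, here is a minimal sketch that turns a daily wearable metric into a nurse follow-up task instead of a patient notification. It assumes a single daily metric (for example, step count) and compares each patient against their own baseline; the names (`check_deterioration`, `NurseTask`), the 14-day baseline, 3-day recent window and 30% drop threshold are all hypothetical and would need clinical validation.

```python
from __future__ import annotations
from dataclasses import dataclass
from statistics import mean


@dataclass
class NurseTask:
    patient_id: str
    reason: str  # shown to the care team, never pushed to the patient


def check_deterioration(patient_id: str, daily_values: list[float],
                        baseline_days: int = 14, recent_days: int = 3,
                        drop_fraction: float = 0.3) -> NurseTask | None:
    """Compare the recent average of a daily metric with the patient's own baseline."""
    if len(daily_values) < baseline_days + recent_days:
        return None  # not enough history to judge; stay silent rather than add noise
    baseline = mean(daily_values[:baseline_days])
    recent = mean(daily_values[-recent_days:])
    if recent < baseline * (1 - drop_fraction):
        return NurseTask(patient_id,
                         f"metric fell from baseline {baseline:.0f} to {recent:.0f} "
                         f"over the last {recent_days} days")
    return None


steps = [5000.0] * 14 + [4800.0, 3000.0, 3000.0, 3000.0]
task = check_deterioration("patient-001", steps)
print(task)
```

The patient wears the sensor and does nothing else; any returned task lands in the care team’s queue, which keeps the technical burden on the clinical side.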

P.S. If you're enjoying Healthtech for Lifescience Leaders, please consider referring this edition to a friend.

And whenever you are ready, there are 2 ways I can help you:

The AI-Augmented Leader Email Course: Sign up for my free 5-day email course on how to become an AI-Augmented Leader in Lifesciences.

Strategic Roadmap Design: Translate your priorities across different parts of the organization into a coordinated and clear roadmap in 2026. Book time on my calendar to discuss this further.